Chat with RTX - a first glimpse

Hello, my graphics card, I wanted to ask you a question

I've lived to see interesting times: on my RTX laptop I can not only see what graphical wonders the gamedev industry can squeeze out of integrated circuits, but also chat with my own resources. However strange that sounds...

Chat with RTX is new software from Nvidia (published mid-Feb) that lets us harness our graphics card for "serious" tasks, like answering where my notes file on this or that topic is. All of this happens privately, on your own hardware, using TensorRT-LLM and a model that we enrich with knowledge about our resources.

Installation

The installation file takes up 35GB and requires Windows and an Nvidia card from the 30/40 series with RTX on board.

The installed application takes up less than 30GB.

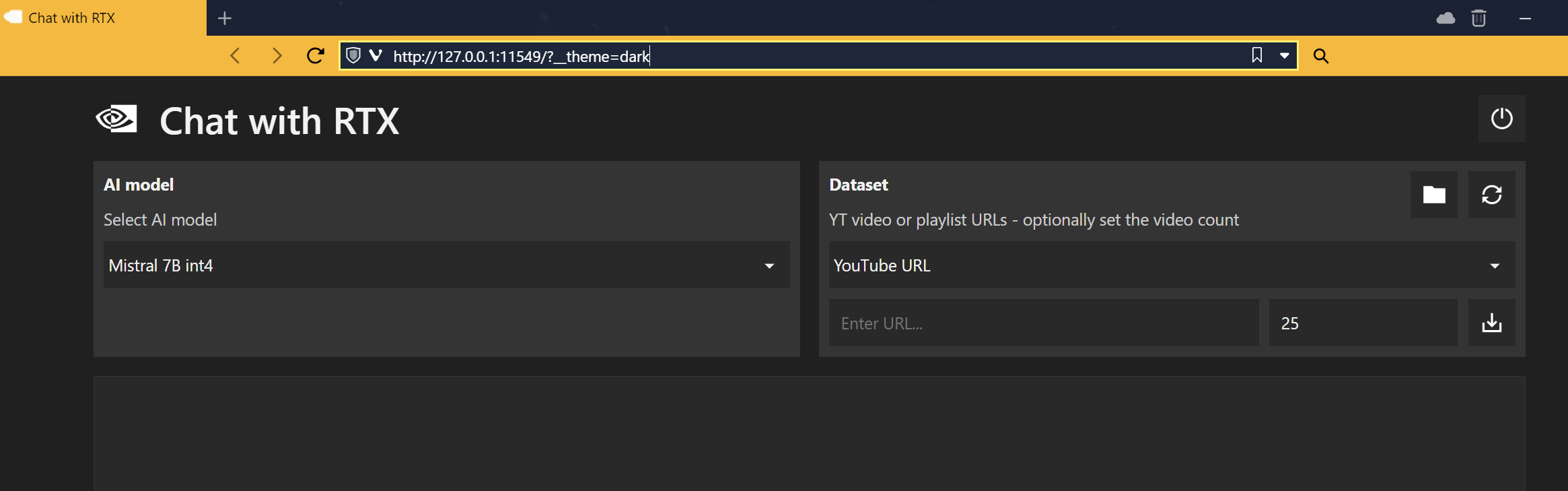

After starting, a terminal with a running server will open, which we connect to via localhost - on my machine it's on port 11549. I have one model available, Mistral 7B int4.

As a Dataset, we can specify a local folder (supported formats are pdf, doc, and txt) or a YouTube URL.
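Before pointing the app at a folder, it can be handy to check how many files it could actually pick up. Below is a minimal sketch of my own, not anything shipped with Chat with RTX: the function names are hypothetical, the extension list just mirrors the formats mentioned above, and scanning subfolders recursively is my assumption about how a dataset folder might be read.

```python
from pathlib import Path

# Extensions taken from the formats listed in this post; the app itself may
# accept more or fewer (this set is an assumption, not an official list).
SUPPORTED_EXTENSIONS = {".pdf", ".doc", ".txt"}


def find_supported_files(folder):
    """Return sorted paths under `folder` whose extension is in SUPPORTED_EXTENSIONS.

    Subfolders are scanned recursively, which is an assumption about how a
    dataset folder would be processed.
    """
    root = Path(folder)
    return sorted(
        path
        for path in root.rglob("*")
        if path.is_file() and path.suffix.lower() in SUPPORTED_EXTENSIONS
    )


def summarize(folder):
    """Return (number of supported files, their total size in bytes)."""
    files = find_supported_files(folder)
    total_bytes = sum(path.stat().st_size for path in files)
    return len(files), total_bytes
```

Calling `summarize` on the directory you plan to use as a Dataset gives a rough idea of how much material there is before indexing starts.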

Summarizing a YouTube video

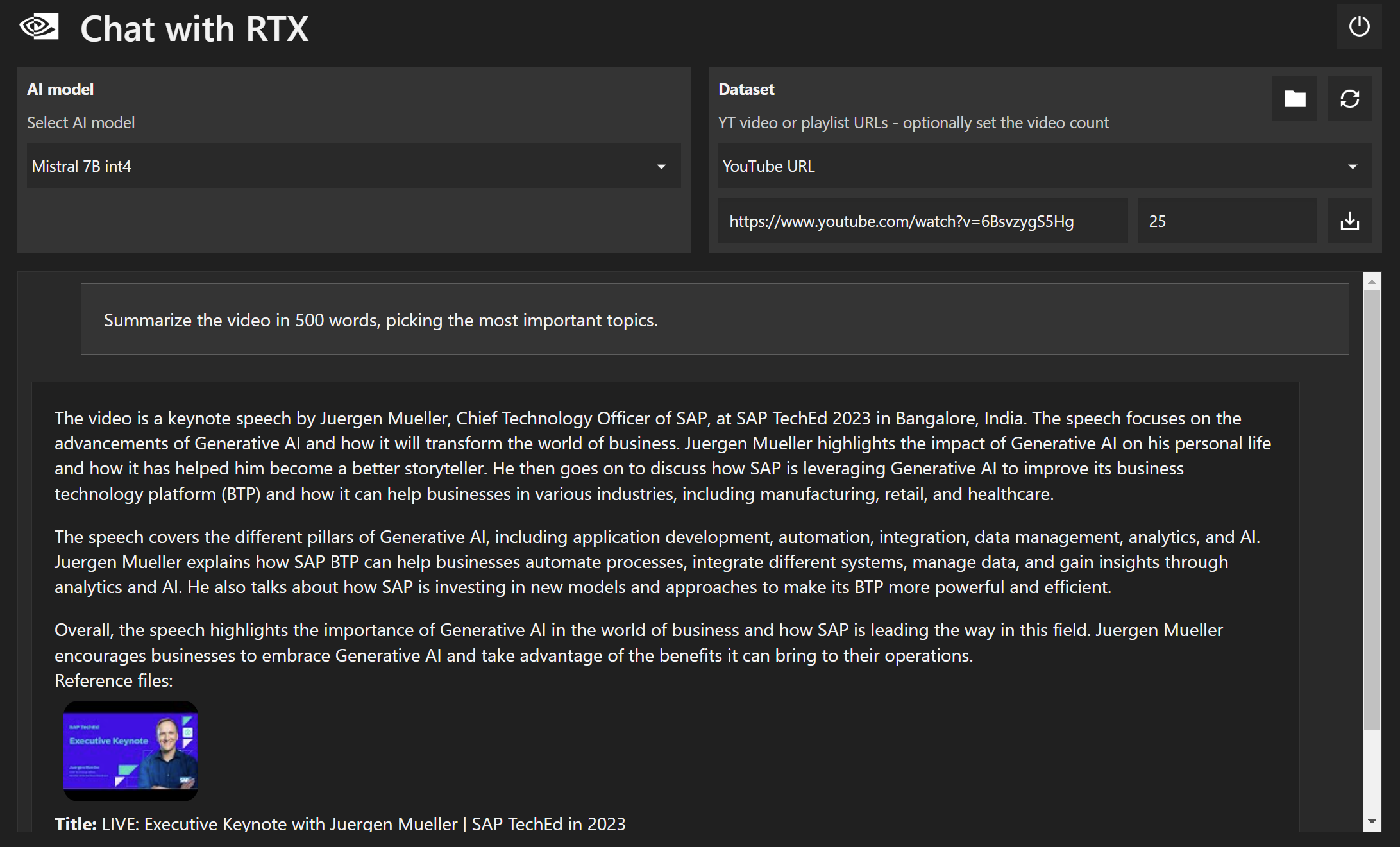

So, I'm doing the first test, asking for a 500-word summary of the keynote from SAP TechEd 2023:

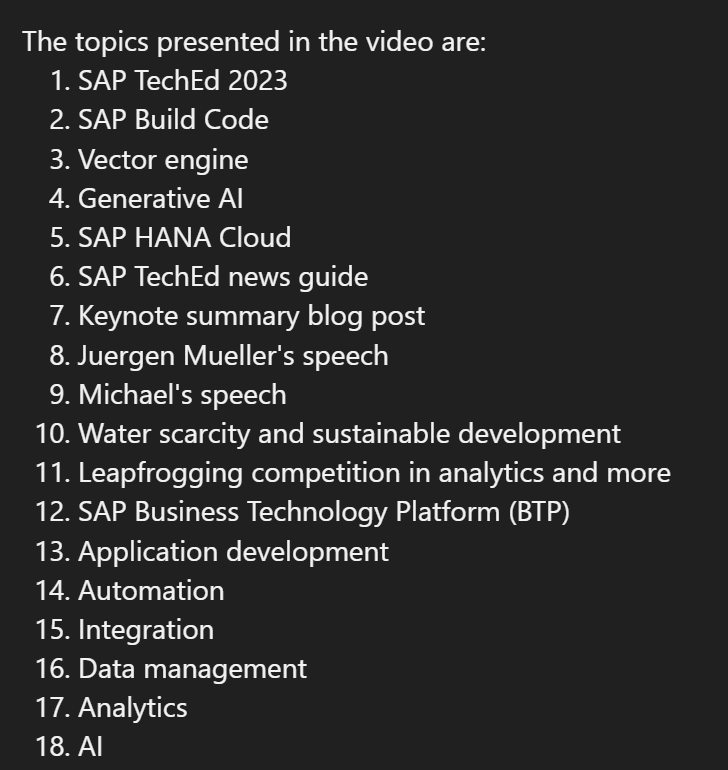

OK, for the summary, the AI chose one main topic discussed in the keynote - AI :D. But hey, there was more. I ran another test asking for a 2000-word summary, and this time I got more topics: AI and SAP Analytics Cloud. However, other topics that in my opinion belong in such a summary are still omitted. The next prompt is a direct request for ALL topics covered in the keynote:

Well, great, we have them all listed. Now let's ask what Joule is:

Finally - will SAP HANA get the Vector Engine and if so - when will it be available?

I didn't mean whether it's being used internally - just when it will be available for customers.

Asked differently:

Alright. The prompt matters. In this case, I feel that adding the word "external" before "clients" steered the model towards the goal, because the context for this statement is as follows:

Asking about knowledge from local files

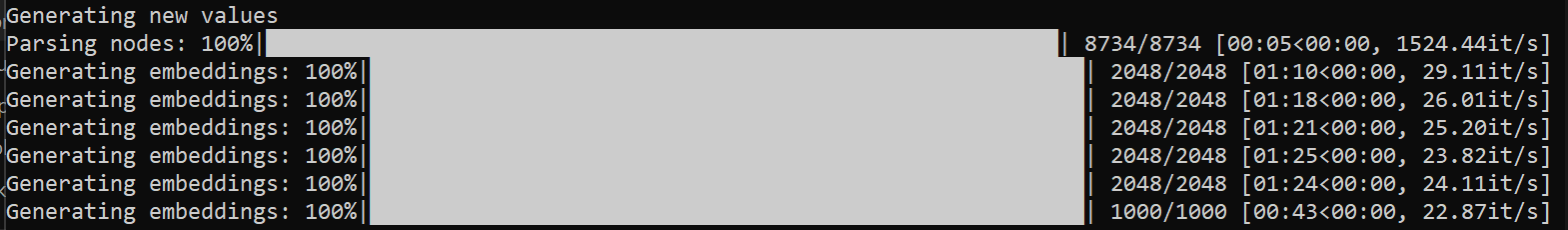

Alright, enough talking about videos. It's time for some meat in the form of queries in the context of my files. I point to a directory with my purchased PDFs from SAP Press (about 2GB) and the "knowledge acquisition" process begins:

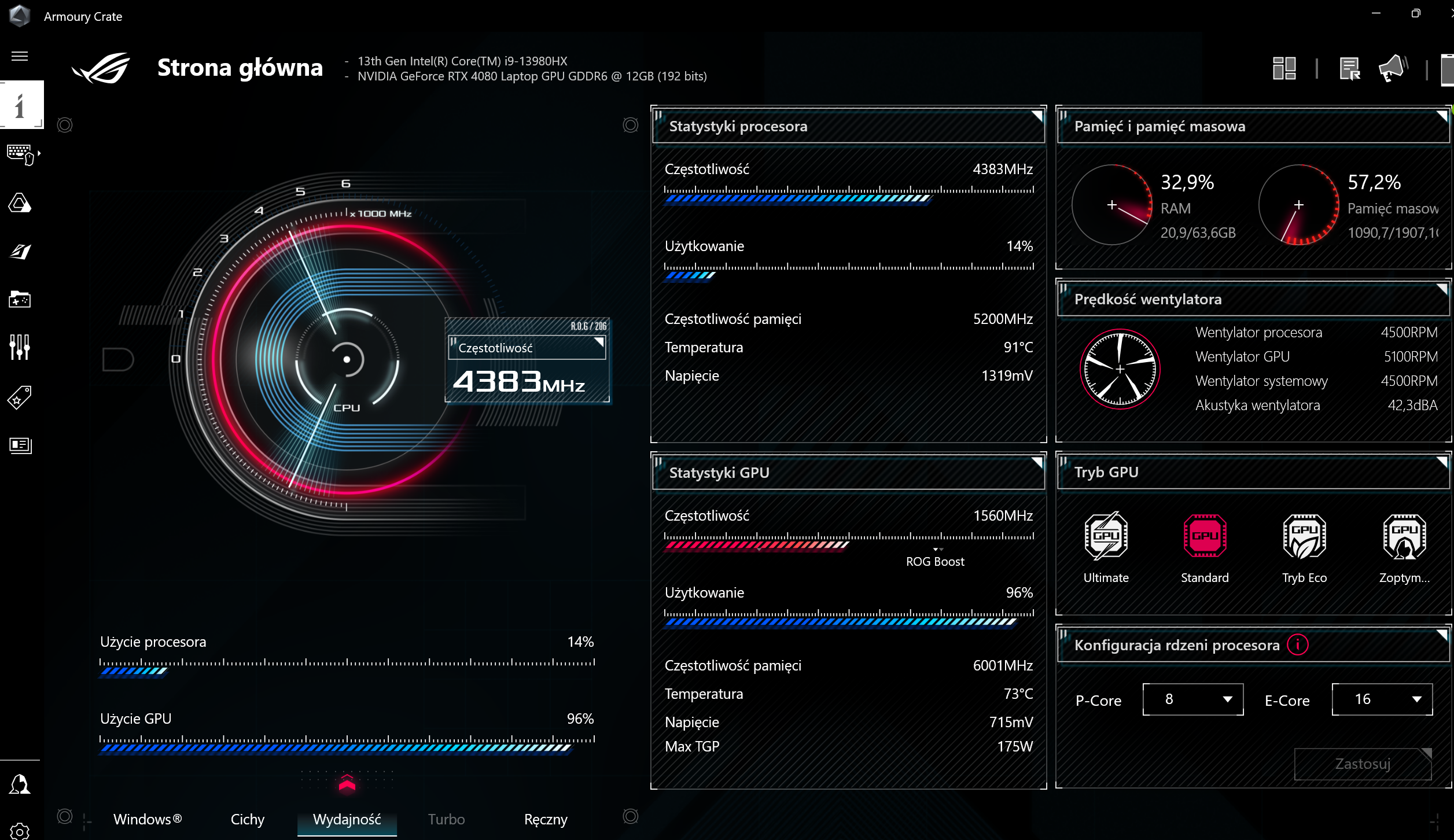

I have "Performance" mode set, and during embedding generation the GPU works at full capacity:

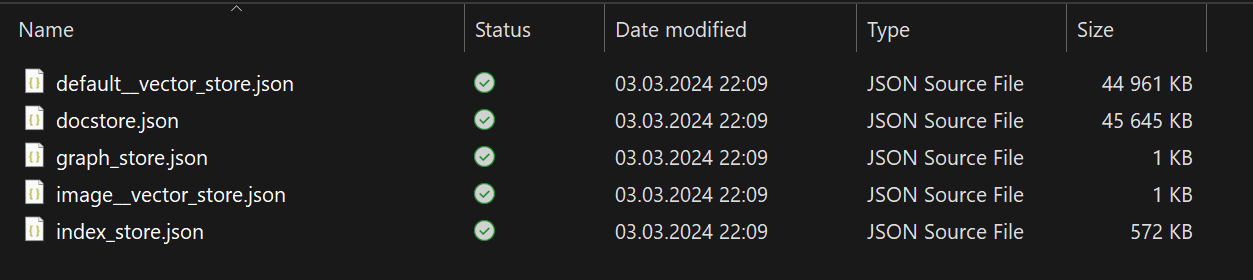

Generating on my configuration (Intel Core i9 + RTX 4080 (12GB GDDR6) + 64GB RAM) took approx. 12 minutes. As a result, alongside the original directory, we have an additional one with "local knowledge" (about 100MB):

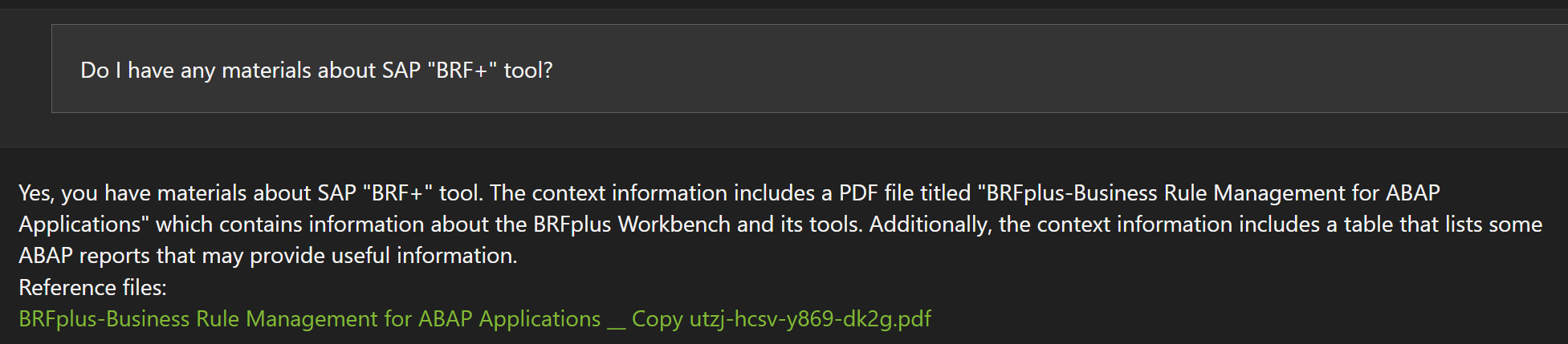
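To get a feel for what such "local knowledge" is good for, here is a toy sketch of embedding-based lookup. It is not how Chat with RTX is implemented: I swap the neural embedding model for a simple bag-of-words vector, the function names are hypothetical, and the sample chunks are short sentences I made up for illustration rather than text from my books.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'embedding': a bag-of-words count vector.

    Real systems use a neural embedding model; this stand-in only keeps the
    idea that each chunk of text becomes a vector we can compare.
    """
    return Counter(re.findall(r"[a-z0-9+]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(chunks):
    """Precompute a vector per chunk (the slow, one-time step)."""
    return [(chunk, embed(chunk)) for chunk in chunks]


def retrieve(index, question, top_k=1):
    """Return the top_k chunks most similar to the question."""
    query = embed(question)
    ranked = sorted(index, key=lambda item: cosine(query, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]


# Made-up sample chunks, for illustration only.
SAMPLE_CHUNKS = [
    "BRF+ is a business rules framework for modeling decision logic.",
    "An OData service is registered in SAP Gateway before it can be called.",
    "SAP Analytics Cloud combines planning and reporting.",
]

if __name__ == "__main__":
    index = build_index(SAMPLE_CHUNKS)
    print(retrieve(index, "How do I register a service in SAP Gateway?"))
```

The point of the sketch is the split into two phases: building the index once up front (the part that kept my GPU busy), and then cheaply comparing each question against the stored vectors to pick the text handed to the model.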

Then I ask if I have any materials on the SAP tool "BRF+":

I get a good answer. Now a more specific query - I have information in several books on how to register a service in SAP Gateway:

The model selects the book where there is a very short paragraph titled Register the OData Service - which seems OK, but my internal censor said that the book OData and SAP NetWeaver Gateway should be chosen as the main and more reliable source.

Let's see another question and answer:

Again - I would expect more information to be pointed out from the book OData and SAP NetWeaver Gateway.

A prompt guiding the model to this source did the job:

Summary

I feel like my first shots at the chat fell a bit short of my personal expectations, yet the answers were still valid. But, firstly, I'm not a prompt master, and secondly, Nvidia's software is quite fresh and already looks promising. Searching through a bunch of files to extract some specific information, or making a summary of a given topic - cool.

From my developer's point of view, I wonder if there will be a local version of the model that can handle source files in various programming languages - it doesn't even have to be as advanced as Copilot etc. Even running simpler prompts to find information would be nice, instead of dealing with the advanced search functionalities in IDEs.